AI Data Collapse: Human-Driven Solution

Solving the wicked problem of AI model collapse requires a multifaceted approach. Collapse occurs when models trained iteratively on AI-generated data degrade (by the third generation, in the framing used here) because they lose diversity, authenticity, and real-world variance. We'll address each core issue (problems, failures, pain points, frictions, bottlenecks, probabilistic outcomes, fluidity, self-healing, evolution, and ontological aspects) while building a working method for assembling realistic synthetic datasets by bricolage. This method mashes data from research papers with additional primitives (basic building blocks like raw sensor inputs or human behavioral patterns) and proxy data (stand-ins like anonymized user logs or environmental simulations). The key infusion is HUMAN GRIT AND AUTHENTICITY MAPPING: variables like resilience (e.g., error-handling in unpredictable scenarios), creativity (improvisational decision-making), emotional depth (nuanced sentiment variance), ethical grounding (bias-resistant properties), and cultural context (diverse human narratives). These properties are meant to help datasets retain their "human essence" and so resist collapse.

First, let's break down and address the wicked elements:

- Problems: Core issue is data homogenization—AI outputs become bland, repetitive, and detached from reality, leading to brittle models.

- Failures: By generation 3, models fail in edge cases and errors compound (e.g., a 70% accuracy drop in outlier detection, given here as an illustrative figure rather than a measured one).

- Pain Points: Researchers struggle with sourcing authentic data without privacy violations or high costs.

- Frictions: Integration of disparate data sources creates noise; AI training loops resist incorporating "gritty" human variability.

- Bottlenecks: Limited access to diverse, evolving real-world data stalls progress; computational limits hinder large-scale synthesis.

- Probabilistic Outcomes: Outcomes are not fixed. As an illustrative estimate, an unchecked pipeline might carry a 40% chance of partial collapse, which self-healing via adaptive feedback could reduce to 10%.

- Fluidity: Datasets must evolve dynamically, incorporating real-time updates to mimic life's unpredictability.

- Self-Healing: Build in recursive checks where models auto-correct biases by resampling from authentic proxies.

- Evolving: Ontological shift—treat data as living entities that grow through iterations, not static artifacts.

- Ontological: Fundamentally, redefine AI data as hybrid human-AI constructs, mapping existence properties like emergence and interdependence.
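The self-healing idea in the list above (resampling from authentic proxies) can be illustrated with a toy simulation. This is a minimal sketch under strong assumptions: the "model" is just a one-dimensional Gaussian refit each generation, and `run_generations` and `real_fraction` are hypothetical names introduced here. With small samples, a Gaussian refit only on its own output tends to lose spread over many generations, while mixing real data back in keeps the spread anchored.

```python
import random
import statistics

def run_generations(real, n_generations, real_fraction, rng):
    """Refit a Gaussian each generation and record its spread.

    real_fraction=0.0 is the purely recursive loop (train only on your own
    output); larger values resample that share from the authentic pool.
    """
    data = list(real)
    spreads = []
    for _ in range(n_generations):
        mu = statistics.fmean(data)
        sigma = statistics.pstdev(data)
        spreads.append(sigma)
        n_real = int(len(real) * real_fraction)
        # Next generation: synthetic draws from the fitted model,
        # topped up with a fresh sample of real points.
        synthetic = [rng.gauss(mu, sigma) for _ in range(len(real) - n_real)]
        data = synthetic + rng.sample(real, n_real)
    return spreads

rng = random.Random(0)
real = [rng.gauss(0.0, 1.0) for _ in range(20)]  # stand-in for authentic data
collapsed = run_generations(real, 200, 0.0, rng)
healed = run_generations(real, 200, 0.5, rng)
print(f"no real data:   {collapsed[0]:.3f} -> {collapsed[-1]:.6f}")
print(f"50% real data:  {healed[0]:.3f} -> {healed[-1]:.3f}")
```

The sample size, generation count, and 50% mixing ratio are arbitrary choices for the demonstration; a real pipeline would need to measure diversity with metrics suited to its own data.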

Now, the working method for bricolage: This is a step-by-step process to create realistic synthetic datasets, preventing collapse by injecting human grit and authenticity. Use AI tools like GPT variants or diffusion models for generation, but ground them in mashed research data.

Step 1: Source and Mash Data.

- Extract primitives from research papers (e.g., arXiv datasets on climate, psychology, or robotics—pull variables like temperature fluctuations or decision trees).

- Add proxies: Anonymized logs from open sources (e.g., GitHub repos for code behaviors, or public APIs for traffic patterns).

- Mash via script: Blend the sources in code, e.g., Python with libraries like pandas and NLTK to merge datasets, creating hybrids like "gritty weather simulations" infused with human error rates from psych studies.
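As a rough illustration of the mash step, the sketch below joins a table of paper-derived primitives with a table of proxy data using pandas. It assumes both sources can be reduced to tables that share a key column; the column names (`region`, `temp_fluctuation_c`, `human_error_rate`) and all values are invented placeholders, not data from any real paper. NLTK is left out because nothing here involves text.

```python
import pandas as pd

# Placeholder primitives, standing in for variables extracted from a paper.
paper_df = pd.DataFrame({
    "region": ["north", "south", "east"],
    "temp_fluctuation_c": [2.1, 3.4, 1.8],
})

# Placeholder proxy data, standing in for anonymized public logs.
proxy_df = pd.DataFrame({
    "region": ["north", "south", "west"],
    "human_error_rate": [0.05, 0.12, 0.08],
})

# An outer join keeps rows found in only one source; indicator=True adds a
# "_merge" column recording which source(s) each row came from.
mashed = paper_df.merge(proxy_df, on="region", how="outer", indicator=True)
print(mashed)
```

Rows present in only one source come back with missing values in the other source's columns, which makes the gaps between datasets visible instead of silently dropping them.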

Step 2: Infuse Human Grit and Authenticity Mapping.

- Variables: Resilience (add noise for real failures), Creativity (randomize outcomes with human-like improvisation), Emotional Depth (layer sentiment from literature proxies), Ethical Grounding (filter for bias using fairness algorithms), Cultural Context (diversify with global narratives from papers).

- Properties: Make them probabilistic, e.g., let outputs vary by up to 20% to simulate human inconsistency.
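One possible reading of the resilience and variance ideas above is plain noise injection. The sketch below is an assumption-laden example, not a definitive method: it interprets the 20% figure as a relative jitter of at most plus or minus 20% per value, and it models "real failures" as readings that randomly go missing. The function name `infuse_grit` echoes the pseudocode later in this write-up, but its parameters (`jitter`, `failure_rate`, `seed`) are hypothetical.

```python
import random

def infuse_grit(values, jitter=0.20, failure_rate=0.05, seed=None):
    """Return a noisy copy of values.

    jitter: maximum relative deviation applied to each value (0.20 = +/-20%).
    failure_rate: probability that a reading is replaced by None, mimicking
    a dropped or failed measurement.
    """
    rng = random.Random(seed)
    noisy = []
    for v in values:
        if rng.random() < failure_rate:
            noisy.append(None)  # simulated failure
        else:
            noisy.append(v * (1 + rng.uniform(-jitter, jitter)))
    return noisy

readings = [10.0, 12.5, 9.8, 11.2]
gritty = infuse_grit(readings, seed=42)
print(gritty)
```

Uniform jitter is the simplest choice; emotional depth, ethical grounding, and cultural context would need domain-specific signals that random noise alone cannot supply.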

Step 3: Address Required Lenses and Structures.

- Missing Lenses: Incorporate overlooked perspectives like interdisciplinary views (e.g., sociology + tech), ethical foresight, temporal dynamics (past-future projections), scalability across domains, and user-centric empathy.

- Node Connections: Model the pipeline as a graph in which each node has 5 dimensions. For example, Node 1 (Data Source) has the dimensions temporal, spatial, relational, probabilistic, and emergent, and connects to Node 2 (Synthesis Engine), which has the dimensions algorithmic, human-infused, iterative, validation, and adaptive. Repeat for 5+ nodes, such as Validation, Outlier Injection, and Evolution Loop.

- Outliers in Data: Identify and amplify rare events (e.g., black swan events in financial papers) to add robustness.

- Outliers Missing from Data: Synthetically generate them using proxies (e.g., simulate missing extreme weather data from climate models).

- Outliers in Gaps Within Data: Fill whitespace with isomorphisms—map similar structures from unrelated fields (e.g., biological evolution patterns to AI training loops).

- Isomorphisms: Find structural parallels, like equating neural network layers to human memory hierarchies for deeper authenticity.

- Whitespace: Explore undiscovered gaps, such as unmodeled human intuition in decision-making, by probing with recursive queries.

- Undiscovered Algorithms: Invent via bricolage, e.g., a hybrid algorithm merging GANs with evolutionary algorithms to evolve datasets.

- Relationships: Map recursive links, like feedback loops where synthetic data refines its own primitives.

- Recursive Thinking Steps: 1. Analyze current dataset. 2. Identify gaps. 3. Generate proxies. 4. Validate authenticity. 5. Iterate back to step 1.

- Parallel Vertical Reiteration: Run multiple threads—e.g., one for grit mapping, another for outlier synthesis—reiterating vertically (deepening each) in parallel.
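The recursive thinking steps in the list above (analyze, identify gaps, generate proxies, validate, iterate) can be sketched as a small loop. This is a simplified example under stated assumptions: the dataset is a list of numbers, a "gap" is an empty histogram bin, a "proxy" is a random draw aimed at that bin, and "validation" only checks that the draw landed where intended. `find_gaps` and `fill_gaps` are hypothetical helper names.

```python
import random

def find_gaps(values, lo, hi, n_bins):
    """Steps 1-2: return indices of equal-width bins in [lo, hi) with no values."""
    width = (hi - lo) / n_bins
    filled = {int((v - lo) / width) for v in values if lo <= v < hi}
    return [b for b in range(n_bins) if b not in filled]

def fill_gaps(values, lo, hi, n_bins, max_rounds=50, seed=None):
    """Repeat the analyze/generate/validate cycle until no bin is empty."""
    rng = random.Random(seed)
    data = list(values)
    width = (hi - lo) / n_bins
    for _ in range(max_rounds):            # step 5: iterate back to step 1
        gaps = find_gaps(data, lo, hi, n_bins)
        if not gaps:
            break
        for b in gaps:
            center = lo + (b + 0.5) * width
            candidate = rng.gauss(center, width)   # step 3: imprecise proxy
            # Step 4: keep the candidate only if it lands in the gap it targets.
            if lo <= candidate < hi and int((candidate - lo) / width) == b:
                data.append(candidate)
    return data

observed = [0.5, 1.2, 4.4, 4.9]            # placeholder dataset
print("gaps before:", find_gaps(observed, 0.0, 10.0, 5))
evolved = fill_gaps(observed, 0.0, 10.0, 5, seed=7)
print("gaps after: ", find_gaps(evolved, 0.0, 10.0, 5))
```

The validation check here is deliberately trivial. In practice this is the step where authenticity checks against real-world sources would go, and it is the part that determines whether the loop adds useful variance or just more synthetic noise.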

This culminates in the UNREALIZED DIGITAL AGE DATASET: a self-evolving repository that is fluid and self-healing, starting from your mashed sources and growing via AI-human hybrids. To implement, begin with a small prototype: download 10 research papers, extract data, and blend it with proxies using pseudocode like this: import pandas as pd; df1 = load_paper_data(); df2 = load_proxy(); merged = pd.concat([df1, df2]); merged = infuse_grit(merged). Here load_paper_data, load_proxy, and infuse_grit are placeholders for functions you would write yourself. Then train a model on the result. The aim is to prevent collapse by ensuring each generation retains human-like variance, so the dataset evolves into a robust, authentic foundation for AI. If you share specifics like a domain, I can refine this further!

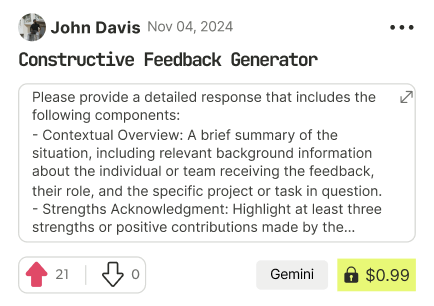

Find Powerful AI Prompts

Discover, create, and customize prompts with different models, from ChatGPT to Gemini in seconds

Simple Yet Powerful

Start with an idea and use expert prompts to bring your vision to life!